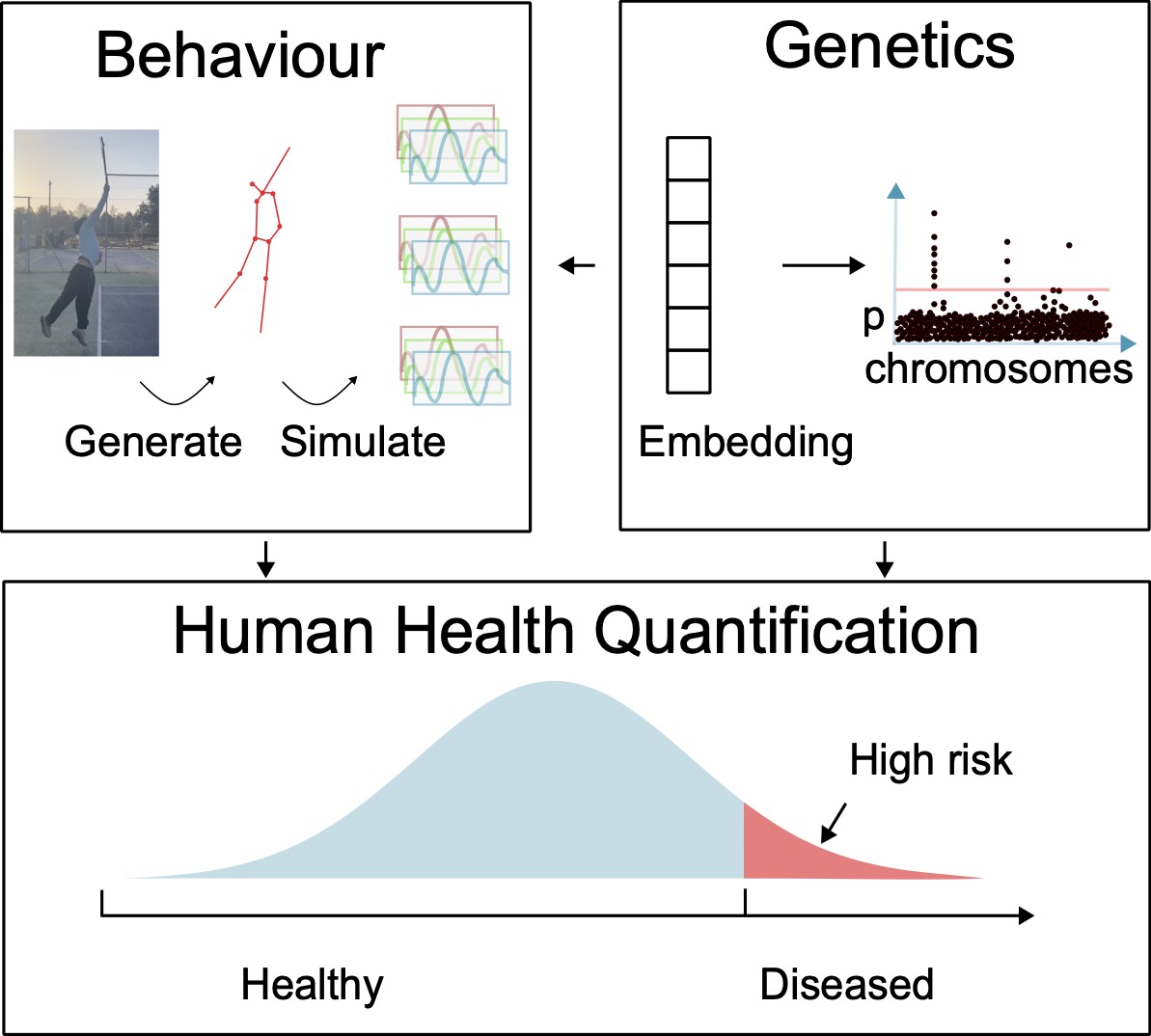

I develop machine learning methods that learn meaningful representations from wearable biosignals to understand human behaviour and improve human health at scale. My work focuses on three levels of representation learning of human behaviour:

- Low-level movement representations that generalise across activities and populations,

- High-level behavioural representations that compress long-term wearable time series for biological and genomic discovery, and

- Multi-modal health representations that integrate biosignals with genetic and physiological data to quantify health along a continuum.

Using self-supervised and data-driven approaches, I build foundation models for wearable data that reduce reliance on labels while improving robustness and real-world applicability. My goal is to turn continuous human sensing into a scalable, interpretable foundation for preventive and precision health.

I daydream of a future where wearable sensing and genetic data can be used to personalise individual treatment plans for all.

My doctoral research was supervised by Aiden Doherty and Simon Kyle and generously sponsored by Novo Nordisk. I also worked with Andrew Zisserman and Timor Kadir for my rotation project. Previously, I worked with Herbert Jaeger and Benn Godde on echo state neural networks for my undergraduate thesis. My master’s thesis was supervised by François Fleuret and Mathieu Salzmann.

I enjoy playing tennis and bouldering outside of work. At times, I also join the other side and use my organic intelligence to augment artificial intelligence at startups like Lume Health.

Finally, I am always looking for motivated students to do projects with me. If you would like to study with me, feel free to email me your interests, CV and a code sample, with the subject line [apple].

Students

- 2026: Firas Rarwish (Oxford Stats-ML CDT rotation)

- 2025: Kyra Edwards (Oxford HDS CDT rotation)

- 2024: Xinyi Cai (Oxford EngSci summer intern)

Selected publications

Self-supervised Learning for Human Activity Recognition Using 700,000 Person-days of Wearable Data

Hang Yuan, Shing Chan, Andrew P. Creagh, Catherine Tong, David A. Clifton, Aiden Doherty

npj Digital Medicine, 2024

Paper Code Web

Self-supervised learning of accelerometer data provides new insights for sleep and its association with mortality

Hang Yuan, Tatiana Plekhanova, Rosemary Walmsley, Amy C. Reynolds, Kathleen J. Maddison, Maja Bucan, Philip Gehrman, Alex Rowlands, David W. Ray, Derrick Bennett, Joanne McVeigh, Leon Straker, Peter Eastwood, Simon D. Kyle, Aiden Doherty

npj Digital Medicine, 2024

Paper Code Web

Digital health technologies and machine learning augment patient-reported outcomes to remotely characterise rheumatoid arthritis

Andrew P. Creagh, Valentin Hamy, Hang Yuan, Gert Mertes, Ryan Tomlinson, Wen-Hung Chen, Rachel Williams, Christopher Llop, Christopher Yee, Mei Sheng Duh, Aiden Doherty, Luis Garcia-Gancedo, David A. Clifton

npj Digital Medicine, 2024

Paper Code Web